Building a Codebase for Humans and Agents

Why We Design for Agent Context

Agentic coding has evolved rapidly in recent years. By early 2025, coding agents could explore repositories, propose changes across multiple files, run tests, and debug failing builds. Yet despite these capabilities, they still struggled in production environments. Agents often lacked the necessary context about business logic, internal conventions, and architectural boundaries. When given too little information, they produced shallow fixes. When given too much, they lost focus or pulled in irrelevant details. The biggest limitation today isn’t raw model intelligence, but whether agents have the right context at the right moment.

At Monk, we design our engineering workflow around this principle. We use AI agents to review code, run comprehensive test suites, and accelerate development, but we also structure our codebase so agents can access the context they need. The result is agents that are dramatically more useful in practice.

Rethinking the Codebase for the Agent Era

As AI tooling became a bigger part of our workflow, we realized we needed to rethink parts of the codebase itself.

To increase engineering output and stay at the cutting edge of development, we needed to make context delivery an explicit design goal. That meant reducing ambiguity, tightening domain boundaries, colocating related logic, and documenting the business rules that would otherwise remain implicit. We wanted agents to enter a directory and immediately inherit the local mental model: what entities exist, what transitions are legal, what assumptions must hold, and what neighboring systems they can safely touch.

How Domain-Driven Structure Delivers Context

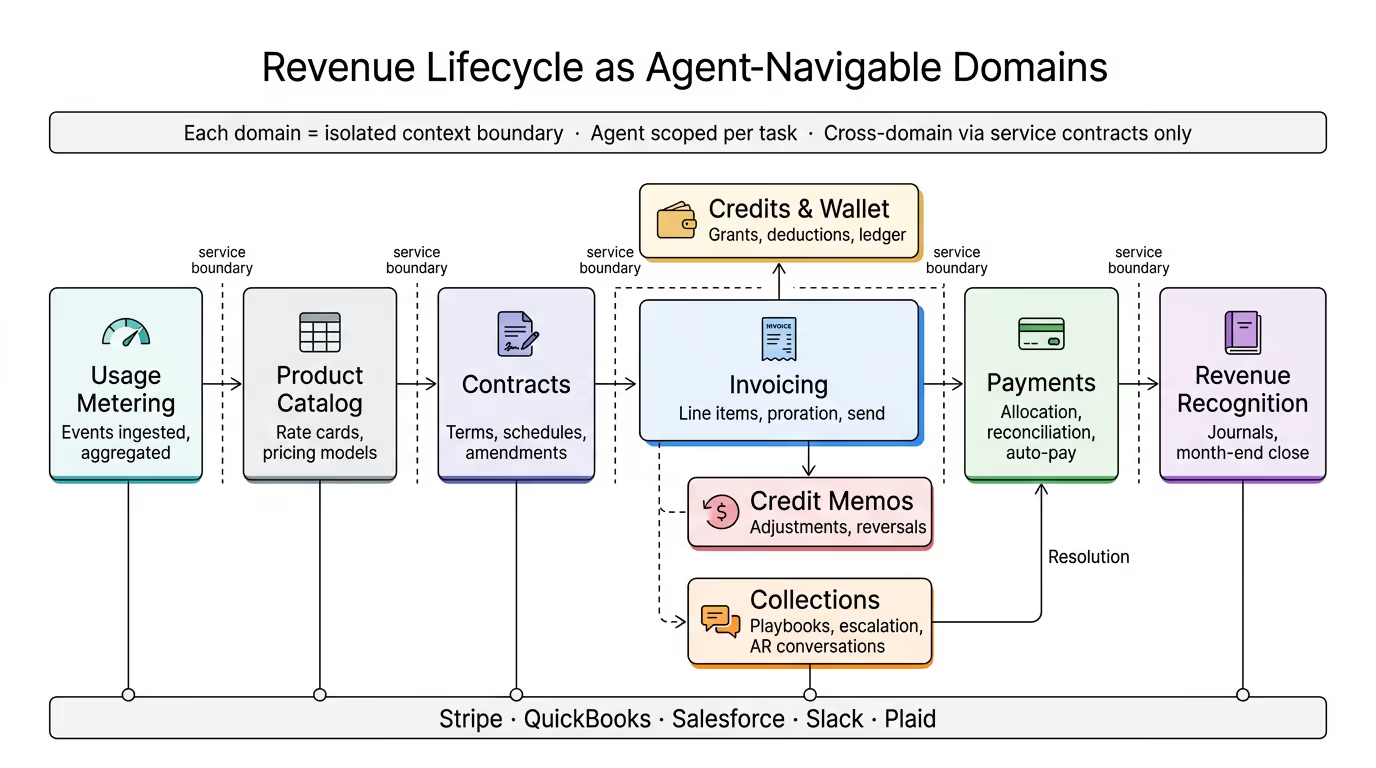

Our backend is organized around business domains. Everything related to a single capability lives together in one directory. Invoicing is one domain. Payments is another. Each integration such as Stripe, QuickBooks, or Salesforce has its own space.

This matters because AI coding agents automatically read instruction files scoped to the directory they are working in. Directory structure becomes a context delivery mechanism.

Each domain includes an AGENTS.md file that captures business knowledge agents cannot infer from code alone: entity relationships, status transitions, invariants, operational constraints, and cross-domain dependencies. In other words, we do not expect the model to reconstruct our business from scattered implementation details. We tell it what matters, close to where that knowledge is needed.

That design choice comes directly from how agentic systems now work. The unit of leverage is not just the prompt. It is the entire context window: system instructions, tools, file structure, retrieved data, message history, and runtime state.

For us, that means codebase structure is not just an organizational preference. It is part of the inference stack.

Context Is King

The reason this matters is simple: LLMs are powerful, but they are not magical. They do not carry perfect working memory through a long coding session. They do not reliably infer hidden business rules from implementation side effects. They do not naturally know which of five similar modules is the one that actually matters.

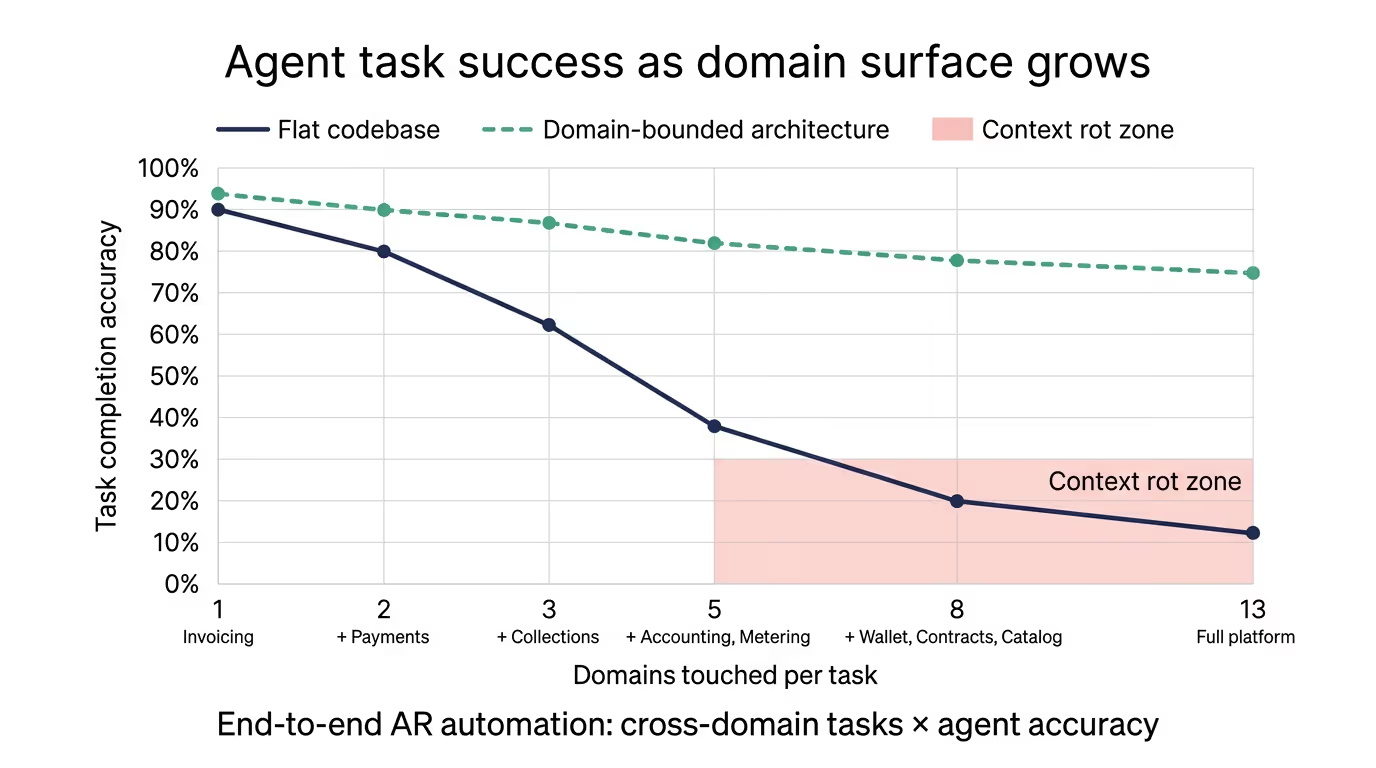

As contexts grow, models can lose focus, degrade in retrieval quality, and exhibit what teams call “context rot.”

Keeping Business Rules Fresh

We are deliberate about where we write the instruction files and where we do not. A stale file is worse than no file at all, because agents can follow outdated rules confidently.

This is another place where the industry learned a lot over the last year. As agents became better at executing multi-step work, the cost of incorrect context went up. A weak model with weak autonomy may fail obviously. A stronger model with stale guidance can fail more convincingly. That is a more dangerous failure mode.

So we treat these files as operational assets, not passive documentation. We have specialized agents that flag stale AGENTS.md files, along with an engineering culture built around accuracy. The goal is not maximum documentation. It is maximum trustworthiness per token.

Building for the Agent-Native Future

The patterns that make code navigable for humans such as clear boundaries, colocated concerns, and documented invariants are the same patterns that make agents effective.

New team members onboard faster because domain knowledge is written down where they need it. Agents perform better because the information they rely on is scoped, current, and close to the code they are modifying. The whole team ships faster because context is never more than a directory away.

As AI tooling becomes a bigger part of how software gets built, we believe the teams that invest in codebase structure will compound their advantage. Benchmark progress is real, and model capability is improving quickly, but raw model performance is only part of the story. The teams that win will be the ones that can consistently give those models the right context, in the right shape, at the right time. At Monk, that is core to how we build.

If you are interested in building at the app-layer and solving hard problems with LLMs in prod - we are hiring