Reinventing contract-to-cash: how AI-native architecture drives real-time results

Finance and post-sales teams have a problem. The most important document they handle is the contract, because its performance obligations feed both the invoice and revenue recognition (rev-rec). And yet this process doesn't get the love it deserves.

A sales rep closes a deal. A PDF lands in someone's inbox. And then begins the ritual: someone opens the contract, squints at the payment terms, manually types numbers into a spreadsheet or ERP, cross-references product names against a rate card, builds an invoice, sets up a billing schedule, and hopes they didn't miss the clause on page 14 that changes pricing after month six.

This process is how billions of dollars in B2B revenue get operationalized. It is slow and error-prone.

At Monk, we set out to solve this problem. Since 2025, we've been pushing what is possible with our contract extraction, billing, and contract lifecycle management engines. After another technical breakthrough this month, we'd love to share the latest.

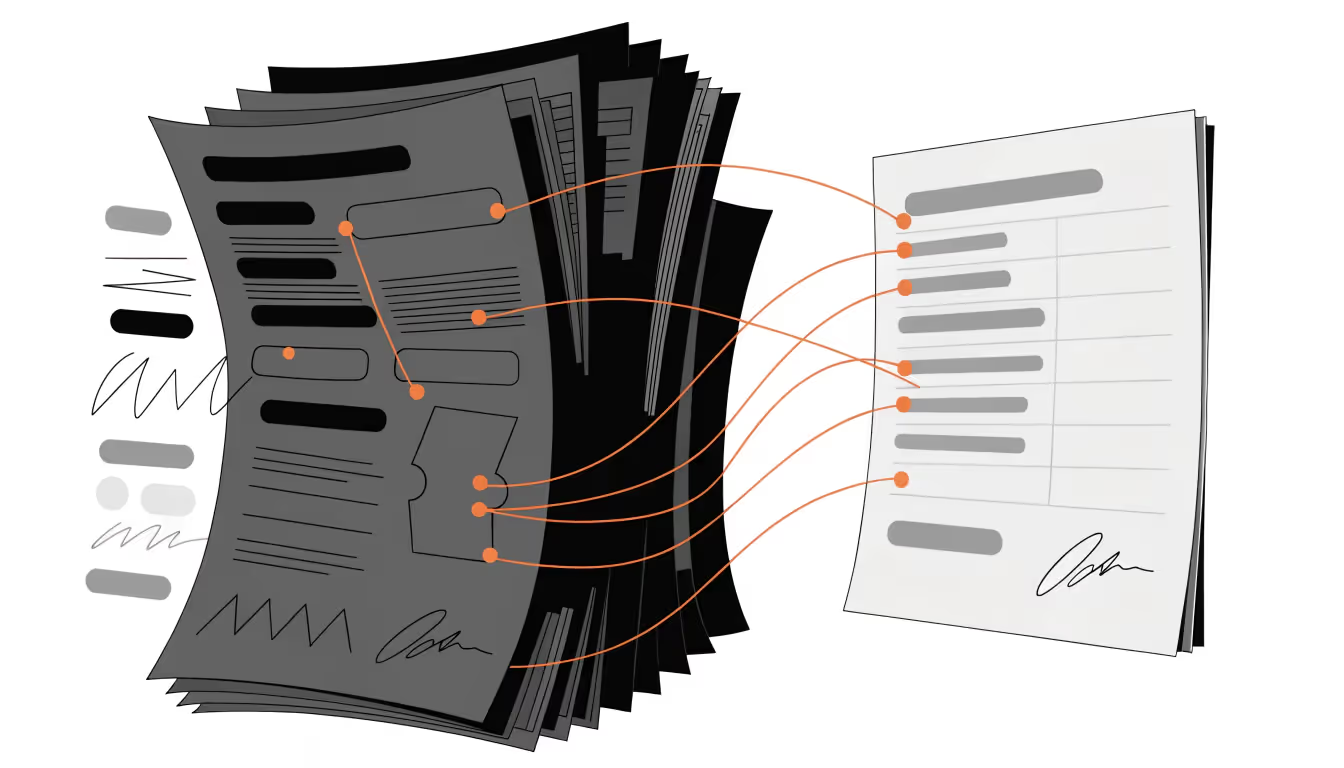

What "Document Extraction" Actually Means in the Context of Contract-to-Cash

When most people hear "document extraction," they think optical character recognition (OCR). Scan a document, pull out text, maybe grab a few fields. It's what companies have been doing since the 1990s with varying degrees of accuracy and approximately zero understanding of what the text actually means. There are a number of issues with this approach.

We've cycled through (and continue to evaluate) a number of approaches: Tesseract, every frontier model (often trying multiple models in bursts), combinations of these approaches, and internal tooling.

What Monk does is fundamentally different.

We don't just extract text from contracts — we comprehend them. Our system reads a contract and identifies the specific elements that matter for billing: contact information, performance obligations under ASC 606, pricing structures (including tiered, usage-based, and hybrid models), payment terms, renewal clauses, and amendment conditions. Then it matches those obligations against your existing product catalog to generate invoices and billing schedules automatically.

The distinction between extraction and comprehension matters. A traditional OCR system can tell you there's a number on page 3. Our system knows that number is a per-unit price for a specific performance obligation that starts billing on a different date than the rest of the contract, with a 3% annual escalator buried in an addendum.
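To make the distinction concrete, here is a minimal sketch of what a "comprehended" obligation might look like once extracted, and how its escalated price could then be computed. The `Obligation` fields, the sample values, and the assumption that the escalator compounds once per contract year starting in year 2 are all hypothetical; they are not Monk's actual schema or pricing logic.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal, ROUND_HALF_UP


@dataclass(frozen=True)
class Obligation:
    """Hypothetical shape of one extracted performance obligation."""
    name: str
    unit_price: Decimal        # per-unit price in contract year 1
    billing_start: date        # may differ from the rest of the contract
    annual_escalator: Decimal  # e.g. Decimal("0.03") for a 3% escalator
    source: str                # where the term was found (illustrative)


def price_in_year(ob: Obligation, contract_year: int) -> Decimal:
    """Per-unit price in a 1-based contract year, assuming the escalator
    compounds once per year starting in year 2."""
    if contract_year < 1:
        raise ValueError("contract_year is 1-based")
    factor = (Decimal(1) + ob.annual_escalator) ** (contract_year - 1)
    return (ob.unit_price * factor).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# Illustrative values only.
seats = Obligation(
    name="Platform seats",
    unit_price=Decimal("100.00"),
    billing_start=date(2025, 4, 1),
    annual_escalator=Decimal("0.03"),
    source="Addendum A",
)
for year in (1, 2, 3):
    print(year, price_in_year(seats, year))  # 100.00, 103.00, 106.09
```

Using `Decimal` rather than `float` keeps money arithmetic exact up to the explicit rounding step.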

The Architecture: Frontier Models, Eval Pipelines, and Guardrails

We don't use a single model. We use an ensemble of frontier models, each selected for what it does best, orchestrated through a pipeline that prioritizes accuracy over speed. That said, speed still matters, and we'd like to think our extraction is fast: it typically completes in under 2 minutes.

Why Multiple Models

Contract language is adversarial by nature. It's written by lawyers to be precise in ways that are hostile to casual reading. A single model, no matter how capable, will have blind spots. On top of that, speed matters in some cases. So we use some models for reasoning, some for raw extraction, others for amendments, and still others as fallbacks.

By routing different extraction tasks to the models best suited for them, we get compound accuracy that no single model achieves alone.
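A minimal sketch of task-based routing with a fallback chain is shown below. The task names, the model identifiers (`model-a` and so on), and the `call_model` callable are hypothetical placeholders; the post does not say which models handle which tasks.

```python
from typing import Callable, Dict, List

# Hypothetical routing table: each extraction task maps to an ordered list of
# model identifiers. The first entry is preferred; the rest are fallbacks.
ROUTES: Dict[str, List[str]] = {
    "reasoning": ["model-a", "model-b"],
    "raw_extraction": ["model-c", "model-a"],
    "amendment_diff": ["model-b", "model-a"],
}


class AllModelsFailed(Exception):
    """Raised when every model in a task's chain fails."""


def route(task: str, payload: str, call_model: Callable[[str, str], str]) -> str:
    """Send `payload` to the preferred model for `task`, falling back in order
    when a call raises. `call_model(model_id, payload)` stands in for a real
    provider client."""
    errors = []
    for model_id in ROUTES[task]:
        try:
            return call_model(model_id, payload)
        except Exception as exc:  # timeouts, rate limits, malformed output...
            errors.append(f"{model_id}: {exc}")
    raise AllModelsFailed("; ".join(errors))


# Usage with a fake client in which model-c is unavailable.
def fake_call(model_id: str, payload: str) -> str:
    if model_id == "model-c":
        raise TimeoutError("timed out")
    return f"{model_id} handled {payload!r}"


print(route("raw_extraction", "page 3", fake_call))  # model-a handled 'page 3'
```

Keeping the routing table as data rather than code means swapping or reordering models is a configuration change that can be run through the eval suite before it ships.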

Evals as a First-Class Citizen

Here's where most teams building on LLMs get it wrong: they treat evaluation as an afterthought. Ship a prompt, spot-check a few outputs, call it done. That approach is fine for a chatbot. It's catastrophic for financial data.

We use Braintrust as our eval and observability layer. This is not optional infrastructure. Evals are the backbone of our release process. Every change to our extraction pipeline (whether it's a prompt adjustment, a model swap, or a new document type) runs against a comprehensive evaluation suite before it touches production.

What this looks like in practice:

- Regression testing on real contracts. We maintain a growing dataset of annotated contracts spanning dozens of industries and commercial structures. Every pipeline change gets scored against this dataset. If extraction accuracy drops on any contract category, the change doesn't ship.

- Continuous production scoring. Braintrust scores production traffic asynchronously as it flows through, so we catch degradation in real time without adding latency. When a frontier model provider ships an update that subtly changes how their model handles nested tables, we know within hours, not weeks.

- Failure-to-test-case conversion. When something goes wrong in production (and with LLMs, things will go wrong), we convert that failure into a permanent test case with one click. Our eval suite only grows. It never shrinks.
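The "doesn't ship" rule from the regression-testing step can be sketched as a per-category gate. This does not use Braintrust's SDK; it assumes per-category accuracy scores for the current baseline and the candidate change have already been computed. The category names, scores, and `tolerance` parameter are made up for illustration.

```python
from typing import Dict, List


def regressions(
    baseline: Dict[str, float],
    candidate: Dict[str, float],
    tolerance: float = 0.0,
) -> List[str]:
    """Return the contract categories where the candidate scores lower than
    the baseline by more than `tolerance`. A category missing from the
    candidate run also counts as a regression."""
    failed = []
    for category, base_score in sorted(baseline.items()):
        cand_score = candidate.get(category)
        if cand_score is None or cand_score < base_score - tolerance:
            failed.append(category)
    return failed


def can_ship(baseline: Dict[str, float], candidate: Dict[str, float]) -> bool:
    """A change ships only if no category regresses."""
    return not regressions(baseline, candidate)


# Illustrative scores only.
baseline = {"saas_hybrid": 0.97, "services_milestone": 0.95, "amendments": 0.93}
candidate = {"saas_hybrid": 0.98, "services_milestone": 0.94, "amendments": 0.95}
print(regressions(baseline, candidate))  # ['services_milestone']
print(can_ship(baseline, candidate))     # False
```

Gating per category rather than on an overall average prevents a gain on common contracts from hiding a drop on a rarer but important contract type.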

This is the part that's hard to replicate. The models are available to anyone. The eval infrastructure that makes them reliable for financial data is not.

Guardrails That Actually Protect Our Customers

LLMs hallucinate. This is not a solvable problem at the model level. We treat it as a risk to be managed at the system level. Our guardrails operate at multiple layers:

Structural validation. Extracted data must conform to expected schemas. If a contract has three line items but the model returns four, that's caught before it reaches your billing system.

Cross-field consistency. If the total contract value doesn't equal the sum of individual obligation prices, something is wrong. We check.

Confidence scoring with human-in-the-loop routing. Not every extraction is equally confident. When our system encounters ambiguous language — and contracts are full of it — it flags the specific fields that need human review rather than guessing. The key insight: it's better to say "I'm not sure about this clause" than to silently generate a wrong invoice.

Deterministic business rules layered on top of probabilistic extraction. The LLM extracts. Deterministic logic validates. This hybrid approach gives us the flexibility of AI with the reliability of traditional software where it counts.
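Taken together, these layers can be sketched as a deterministic validator that runs after extraction. The data shapes, the 0.9 review threshold, and the idea that the expected line-item count comes from an independent deterministic pass are assumptions for illustration, not Monk's actual implementation.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import List


@dataclass
class ExtractedField:
    name: str
    value: object
    confidence: float  # 0.0 to 1.0, as reported by the extraction step


@dataclass
class Extraction:
    total_contract_value: Decimal
    line_items: List[Decimal]  # price of each performance obligation
    fields: List[ExtractedField]


def validate(ex: Extraction, expected_line_items: int,
             review_threshold: float = 0.9) -> List[str]:
    """Deterministic checks layered on top of a probabilistic extraction.
    Returns a list of issues; an empty list means nothing needs review."""
    issues = []

    # Structural validation: the count must match what the document contains
    # (here assumed to come from a separate, deterministic count).
    if len(ex.line_items) != expected_line_items:
        issues.append(
            f"structure: expected {expected_line_items} line items, got {len(ex.line_items)}"
        )

    # Cross-field consistency: obligation prices must sum to the total.
    line_sum = sum(ex.line_items, Decimal("0"))
    if line_sum != ex.total_contract_value:
        issues.append(
            f"consistency: line items sum to {line_sum}, total is {ex.total_contract_value}"
        )

    # Confidence routing: flag the specific low-confidence fields for a human.
    for f in ex.fields:
        if f.confidence < review_threshold:
            issues.append(f"review: {f.name} (confidence {f.confidence:.2f})")

    return issues


# Illustrative extraction: four line items where the contract has three.
ex = Extraction(
    total_contract_value=Decimal("180000"),
    line_items=[Decimal("120000"), Decimal("40000"), Decimal("15000"), Decimal("5000")],
    fields=[
        ExtractedField("payment_terms", "Net 30", 0.98),
        ExtractedField("implementation_milestones", "Phase 1 on kickoff", 0.71),
    ],
)
for issue in validate(ex, expected_line_items=3):
    print(issue)
```

Note that the extra line item still sums to the correct total here, which is why the structural and cross-field checks are kept separate: each catches errors the other can miss.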

A Deeper Look Into the Full Contract Processing Pipeline

Most "extraction" tools stop at extraction. They hand you a JSON blob of fields and wish you luck. Monk runs the entire pipeline, from document ingestion to invoice generation, with a human involved only to validate the data.

And again, we do it in near real time. No need to wait 24 hours.

Multi-Source Ingestion

Contracts arrive from wherever your sales team works, and Monk meets them there.

Manual upload. Drag and drop a PDF or Word doc directly into Monk. Extraction starts immediately and is typically ready in 2 minutes, and the pipelines run 24/7.

Salesforce. Monk auto-syncs opportunities, accounts, contacts, and attached documents. When a deal closes in Salesforce, the contract is already being processed before anyone on the finance team knows about it.

HubSpot. Deals pull in automatically, mapped to contracts with invoice sync built in. No export-import dance between systems.

DocuSign. The moment a signature lands, the document flows into the extraction pipeline. No email forwarding, no downloads.

The point isn't that we integrate with these tools (although we are proud of the integrations).

The point is that extraction triggers automatically from these sources. Documents flow in from wherever your sales team works, and billing schedules flow out without a handoff in between.

As a result, you get invoice schedules today, not days from now.

Pricing Complexity Others Won't Touch

Here's where most billing platforms quietly fail: the hybrid deal. A contract that combines a flat monthly platform fee, tiered pricing on API calls, and usage-based overage billing — all in the same agreement.

Most platforms force you to pick one pricing model per product. When a real-world contract combines flat fees, tiered pricing, and usage-based billing in the same document, you're back to manual workarounds, shadow spreadsheets, or splitting the deal into artificial sub-contracts that don't reflect the actual commercial relationship.

Monk handles hybrid pricing natively. The AI extracts each pricing component, classifies it correctly, and generates the appropriate billing schedule for each — monthly invoices for the flat fee, threshold-triggered invoices for usage, milestone-based invoices for services — all from a single contract, all automated.
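As a rough illustration of what "hybrid" means for the arithmetic, here is a sketch of one month's charges for a contract that combines a flat platform fee, graduated tiered pricing on API calls, and a usage overage above an included threshold. All prices, tiers, and thresholds are hypothetical, and graduated (rather than volume) tiering is an assumption; real contracts specify which applies.

```python
from decimal import Decimal
from typing import Dict, List, Optional, Tuple

# A tier is (upper bound of the tier, price per unit); None means unbounded.
Tier = Tuple[Optional[int], Decimal]


def graduated_charge(quantity: int, tiers: List[Tier]) -> Decimal:
    """Charge each unit at the rate of the tier it falls into
    (graduated pricing, not volume pricing)."""
    total = Decimal("0")
    lower = 0
    for upper, unit_price in tiers:
        if quantity <= lower:
            break
        cap = quantity if upper is None else min(quantity, upper)
        total += (cap - lower) * unit_price
        if upper is None:
            break
        lower = upper
    return total


def overage_charge(usage: int, included: int, unit_price: Decimal) -> Decimal:
    """Bill only the usage above the included threshold."""
    return max(usage - included, 0) * unit_price


def monthly_invoice(api_calls: int, storage_gb: int) -> Dict[str, Decimal]:
    """One month of a hypothetical hybrid contract."""
    lines = {
        "platform_fee": Decimal("5000.00"),  # flat monthly fee
        "api_calls": graduated_charge(api_calls, [
            (100_000, Decimal("0.010")),
            (500_000, Decimal("0.008")),
            (None, Decimal("0.005")),
        ]),
        "storage_overage": overage_charge(storage_gb, included=1_000,
                                          unit_price=Decimal("0.50")),
    }
    lines["total"] = sum(lines.values(), Decimal("0"))
    return lines


print(monthly_invoice(api_calls=250_000, storage_gb=1_200))
```

For 250,000 API calls, the first 100,000 bill at $0.010 ($1,000) and the next 150,000 at $0.008 ($1,200); 200 GB of storage over the 1,000 GB threshold adds $100, for a total of $7,300 with the flat fee.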

Invoices That Write Themselves

Extracted contract terms flow directly into payment schedules, and invoices auto-generate at the right time. No human builds the invoice. No one sets calendar reminders for billing dates. The system knows when each obligation should be billed and acts on it.

Mid-period changes trigger automatic proration. If a customer upgrades halfway through a billing cycle, Monk calculates the credit and the new charge without anyone opening a calculator, and, as always, the Monk platform shows its work. Meter events are matched to the correct usage-based line items and invoices automatically, with no manual reconciliation of consumption data against billing records.
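The upgrade case can be sketched with simple day-based proration: credit the unused portion of the old plan and charge the remaining portion of the new one. Calendar-day proration with an exclusive period end is one common convention, assumed here for illustration; contracts may specify other methods, and the prices are hypothetical.

```python
from datetime import date
from decimal import Decimal, ROUND_HALF_UP
from typing import Dict

CENT = Decimal("0.01")


def prorate_upgrade(old_price: Decimal, new_price: Decimal,
                    period_start: date, period_end: date,
                    change_date: date) -> Dict[str, Decimal]:
    """Credit the unused part of the old plan and charge the remaining part
    of the new plan, by calendar day. `period_end` is exclusive."""
    total_days = (period_end - period_start).days
    remaining_days = (period_end - change_date).days
    if total_days <= 0 or not 0 <= remaining_days <= total_days:
        raise ValueError("change_date must fall within a non-empty billing period")
    fraction = Decimal(remaining_days) / Decimal(total_days)
    credit = (old_price * fraction).quantize(CENT, rounding=ROUND_HALF_UP)
    charge = (new_price * fraction).quantize(CENT, rounding=ROUND_HALF_UP)
    return {"credit": -credit, "charge": charge, "net": charge - credit}


# Upgrade from a $3,000 plan to a $4,500 plan halfway through a 30-day period.
print(prorate_upgrade(Decimal("3000"), Decimal("4500"),
                      date(2025, 4, 1), date(2025, 5, 1), date(2025, 4, 16)))
```

Here 15 of 30 days remain, so the customer is credited $1,500.00, charged $2,250.00, and owes a net $750.00 for the rest of the period.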

Amendment Intelligence

This is the feature that makes finance teams emotional.

Upload a contract amendment, and the AI parses exactly what changed relative to the original agreement. Not a full re-extraction — a differential understanding. It knows the amendment increased the per-seat price by 12% but didn't touch the implementation milestones. Existing invoices get adjusted. Credits issue where needed. Prorations apply automatically. No manual recalculation. No "let me re-read the original contract and figure out what's different."
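A minimal sketch of the differential idea: treat the extracted amendment as a patch that mentions only the fields it changes, apply it to the original terms, and record exactly what moved. It assumes both documents have already been extracted into the same hypothetical nested-dictionary structure; downstream invoice adjustments, credits, and prorations are not shown.

```python
import copy
from decimal import Decimal
from typing import Dict, List, Tuple

Terms = Dict[str, Dict[str, object]]      # obligation -> field -> value
Change = Tuple[str, str, object, object]  # (obligation, field, old, new)


def apply_amendment(original: Terms, amendment: Terms) -> Tuple[Terms, List[Change]]:
    """Apply only the fields the amendment mentions; everything else carries
    over unchanged. Returns the merged terms and the list of changes.
    The original terms are not mutated."""
    merged = copy.deepcopy(original)
    changes: List[Change] = []
    for ob, fields in amendment.items():
        current = merged.setdefault(ob, {})
        for name, new in fields.items():
            old = current.get(name)
            if old != new:
                changes.append((ob, name, old, new))
                current[name] = new
    return merged, changes


# Illustrative terms: the amendment raises the per-seat price by 12%
# and does not touch the implementation milestones.
original: Terms = {
    "platform_seats": {"unit_price": Decimal("50.00"), "billing": "monthly"},
    "implementation": {"milestones": ("kickoff", "go-live"), "fee": Decimal("20000")},
}
amendment: Terms = {"platform_seats": {"unit_price": Decimal("56.00")}}

merged, changes = apply_amendment(original, amendment)
print(changes)
```

Only one change is reported, for the seat price, while the implementation obligation carries over untouched; the change list is what downstream billing logic would act on.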

In most billing systems, amendments are where things break. In Monk, they're just another document entering the same pipeline.

Real-Time Extraction: No More Waiting 24+ Hours

When we say "real-time," we mean it literally. A contract is uploaded — or synced from Salesforce, HubSpot, or DocuSign — and extraction begins immediately. Not queued. Not batched. Not "processing, check back in X days."

Our system works in real time, at the speed of your business.

What Teams Did Before Us

The honest answer: a combination of manual data entry, fragile templates, and waiting.

The spreadsheet era. Finance teams maintained massive spreadsheets mapping contract terms to billing actions. Every new contract meant manual entry. Every amendment meant finding the right row and hoping you updated all the dependent formulas. Contract amendments were where accuracy went to die.

The rules-based automation era. Some teams graduated to CPQ or billing platforms with rules engines. Better than spreadsheets, but these systems could only handle contracts that fit predefined templates. The moment a contract had non-standard terms — which is most contracts — someone was back to manual entry. These tools automated the easy 40% and left the hard 60% untouched.

The offshore BPO era. Larger companies outsourced contract processing to business process outsourcing teams. This "solved" the problem by throwing labor at it. Turnaround times of 24-72 hours were considered acceptable. Error rates of 5-10% were considered normal. And the process only operated Monday through Friday, business hours, because it was human-powered.

Monk replaces all of this. No queue. No batch window. No business hours constraint. A contract signed at 11 PM on a Saturday generates a billing schedule before midnight.

What Real-Time Unlocks

The speed itself is useful, but the second-order effects are transformational:

Cash acceleration. If your first invoice goes out 3 days faster on every deal, and you close 50 deals a month, you've just moved your entire cash collection curve forward by 3 days. For a growing company, that's not a nice-to-have. It can be the difference between funding the next quarter from operations and funding it from your credit line.

Error reduction through immediacy. When extraction happens in real time, discrepancies surface while the deal context is still fresh. The sales rep remembers the negotiation. The customer remembers what they agreed to. Catching a billing error in hour one is a quick fix. Catching it in month three is a dispute.

Continuous close, not month-end panic. When every contract is processed as it arrives, there's no backlog to clear at month-end. Revenue recognition is current. Deferred revenue schedules are accurate. The finance team's relationship with the calendar changes entirely.

Scale without headcount. This is the one that matters most for growing companies. The traditional model requires roughly linear scaling — more contracts mean more people processing them. Monk breaks that curve. We've seen teams handle 3-5x contract volume growth without adding headcount to their billing operations.

Select Technical Decisions That Make Monk Better

A few choices we made early that turned out to be load-bearing:

Schema-first extraction. We don't ask the model an open-ended "what's in this contract?" Instead, we define precise output schemas (performance obligations, pricing, terms, counterparty info) and constrain the model to fill them. This dramatically reduces hallucination and makes downstream processing deterministic.
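Here is a sketch of what a schema-first setup might look like: a JSON Schema describing the only shape the model is allowed to return, plus a check that rejects anything outside it. The field names and enum values are hypothetical. In practice one would likely use a provider's structured-output feature and a full JSON Schema validator library; the small validator below covers only the subset of keywords the schema uses, to keep the sketch self-contained.

```python
import json
from typing import Any, Dict, List

# Hypothetical output schema for one contract (JSON Schema keywords).
CONTRACT_SCHEMA: Dict[str, Any] = {
    "type": "object",
    "additionalProperties": False,
    "required": ["counterparty", "payment_terms", "performance_obligations"],
    "properties": {
        "counterparty": {"type": "string"},
        "payment_terms": {"type": "string"},
        "performance_obligations": {
            "type": "array",
            "items": {
                "type": "object",
                "additionalProperties": False,
                "required": ["name", "pricing_model", "amount"],
                "properties": {
                    "name": {"type": "string"},
                    "pricing_model": {"enum": ["flat", "tiered", "usage", "milestone"]},
                    "amount": {"type": "number"},
                },
            },
        },
    },
}

JSON_TYPES = {"object": dict, "array": list, "string": str, "number": (int, float)}


def check(value: Any, schema: Dict[str, Any], path: str = "$") -> List[str]:
    """Validate `value` against the JSON Schema subset used above
    (type, enum, required, additionalProperties=false, items)."""
    if "enum" in schema:
        return [] if value in schema["enum"] else [f"{path}: {value!r} not allowed"]
    expected = schema["type"]
    # JSON booleans are never valid for the types used here (bool subclasses int).
    if isinstance(value, bool) or not isinstance(value, JSON_TYPES[expected]):
        return [f"{path}: expected {expected}"]
    errors: List[str] = []
    if expected == "object":
        props = schema.get("properties", {})
        for key in schema.get("required", []):
            if key not in value:
                errors.append(f"{path}: missing {key}")
        for key, item in value.items():
            if key in props:
                errors += check(item, props[key], f"{path}.{key}")
            elif schema.get("additionalProperties") is False:
                errors.append(f"{path}: unexpected field {key}")
    elif expected == "array":
        for i, item in enumerate(value):
            errors += check(item, schema["items"], f"{path}[{i}]")
    return errors


# A model response with an invented pricing model and an unrequested field.
raw = """{"counterparty": "Acme Corp", "payment_terms": "Net 30",
  "performance_obligations": [
    {"name": "Platform license", "pricing_model": "flat", "amount": 12000},
    {"name": "API calls", "pricing_model": "per_call", "amount": 0.01, "note": "guessed"}]}"""
for error in check(json.loads(raw), CONTRACT_SCHEMA):
    print(error)
```

Rejecting unexpected fields (`additionalProperties: false`) matters as much as requiring expected ones: a field the schema never asked for is a strong hint that the model is improvising.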

Document chunking strategy. Contracts aren't processed as monolithic blobs. We segment them intelligently — by section, by clause type, by reference structure — so each model call operates on focused context. This improves accuracy on long documents where full-context processing degrades.
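A simplified sketch of section-based chunking follows. It splits contract text at headings and records each chunk's cross-references (for example, a fees clause pointing to Exhibit B) so a caller could pull the referenced section into the same model call. The heading and cross-reference patterns are deliberately simplistic assumptions about contract formatting; real contracts need more robust segmentation.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Assumed heading conventions: "1. Fees", "12.3 Payment", "Exhibit B", "Schedule 1".
HEADING = re.compile(
    r"^(?:\d+(?:\.\d+)*\.?[ \t]+[A-Z][^\n]*|(?:Exhibit|Schedule|Addendum)[ \t]+\w+[^\n]*)$",
    re.MULTILINE,
)
# Assumed cross-reference forms: "Section 4.2", "Exhibit B", "Schedule 1".
XREF = re.compile(r"\b(?:Section\s+\d+(?:\.\d+)*|Exhibit\s+[A-Z]|Schedule\s+\d+)\b")


@dataclass
class Chunk:
    heading: str
    text: str
    references: List[str] = field(default_factory=list)


def chunk_contract(text: str) -> List[Chunk]:
    """Split contract text at headings. Each chunk records the sections it
    cross-references so related context can be sent together."""
    starts = [m.start() for m in HEADING.finditer(text)]
    if not starts or starts[0] != 0:
        starts.insert(0, 0)  # keep any preamble as its own chunk
    chunks = []
    for begin, end in zip(starts, starts[1:] + [len(text)]):
        body = text[begin:end].strip()
        if not body:
            continue
        heading = body.splitlines()[0]
        rest = body[len(heading):]  # ignore the heading's own label
        chunks.append(Chunk(heading, body, sorted(set(XREF.findall(rest)))))
    return chunks


sample = """This Agreement is entered into by Acme and Monk.
1. Fees
Customer pays $5,000 per month. Overage applies as set out in Exhibit B.
2. Term
Twelve months from the Effective Date, subject to Section 1.
Exhibit B
Overage: $0.005 per API call above 500,000 calls per month.
"""
for c in chunk_contract(sample):
    print(c.heading, c.references)
```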

Model-agnostic orchestration. We're not married to any single model provider. Our orchestration layer can route to different models based on document type, extraction task, and observed performance. When a new frontier model drops that's better at table extraction, we can integrate it and validate it through our eval suite in days, not months.
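Routing on observed performance might look like the sketch below: keep eval scores per (document type, extraction task), and switch to a new model only when it beats the incumbent by a minimum margin. The keys, model names, scores, and the `min_gain` threshold are all hypothetical.

```python
from typing import Dict, Tuple

Key = Tuple[str, str]  # (document_type, extraction_task)


def choose_model(scores: Dict[Key, Dict[str, float]], doc_type: str, task: str,
                 incumbent: str, min_gain: float = 0.01) -> str:
    """Pick the model with the best eval score for this (doc_type, task), but
    only switch away from the incumbent if the gain is at least `min_gain`."""
    candidates = scores.get((doc_type, task), {})
    if not candidates:
        return incumbent  # no eval data yet: keep what is known to work
    best = max(candidates, key=candidates.get)
    if best != incumbent and candidates[best] - candidates.get(incumbent, 0.0) >= min_gain:
        return best
    return incumbent


# Illustrative eval scores for a newly released model versus the incumbent.
scores = {
    ("msa_with_exhibits", "table_extraction"): {"model-a": 0.91, "model-new": 0.95},
    ("order_form", "table_extraction"): {"model-a": 0.97, "model-new": 0.975},
}
print(choose_model(scores, "msa_with_exhibits", "table_extraction", "model-a"))  # model-new
print(choose_model(scores, "order_form", "table_extraction", "model-a"))         # model-a
```

The minimum-gain margin is a design choice to avoid churning between models on differences that are within eval noise.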

Two Worlds: With Monk and Without

The easiest way to understand what we've built is to trace a single contract through both realities.

Without Monk

A $180K annual SaaS contract closes on a Friday afternoon. It has three performance obligations: a platform license billed monthly, an implementation fee billed on milestone completion, and a usage-based overage component that kicks in at a threshold defined in Exhibit B.

Here's what happens. The contract sits in a DocuSign completion email over the weekend. Monday morning, someone on the finance team downloads it. They open the PDF and start reading. They spend 20-40 minutes identifying the billing terms, cross-referencing the product names against the rate card, and manually entering everything into the ERP. They may or may not catch the overage clause in the exhibit. They set up three separate billing schedules by hand. The first invoice goes out Wednesday — if nothing else was more urgent. If the contract has an amendment from the negotiation that changed the implementation milestones, there's a coin flip on whether that makes it into the billing system correctly.

Total elapsed time from signature to first invoice: 3-5 business days. Error probability on complex terms: meaningful. Weekend and holiday coverage: zero.

With Monk

Same contract. Signed Friday at 6 PM. By a few minutes past 6 PM, Monk has ingested the document from DocuSign, extracted all three performance obligations, matched them to the correct product plans, identified the usage threshold in Exhibit B, flagged the amended implementation milestones, and generated a draft billing schedule. A human reviews the flagged items: the ambiguous milestone language gets clarified in 5 minutes instead of being discovered 45 days later during a billing dispute. The first invoice is queued before the sales rep has closed their laptop.

Total elapsed time: minutes. Coverage: 24/7/365. The contract volume could 5x tomorrow and the process wouldn't change.

This isn't an incremental improvement to an existing workflow. It's a different workflow entirely.

Why "AI-Enabled" Is Not the Same as AI-Native

There's a wave of legacy billing and AR platforms bolting AI features onto architectures designed in the 2010s. It's worth understanding why that approach has a ceiling.

The Bolt-On Pattern

A typical legacy platform was built around templates, rules engines, and structured input forms. The core assumption: a human reads the contract and enters data into predefined fields. The system then automates what happens after data entry — generating invoices, sending reminders, tracking payments.

When these platforms "add AI," they're usually doing one of two things. First, they add an OCR layer that pre-fills their existing input forms, saving the human some typing but still requiring them to verify every field. The AI is an input accelerator, not a reasoning engine. Second, they add a chatbot or copilot that answers questions about data already in the system. Useful, but it doesn't change how data gets into the system in the first place.

In both cases, the fundamental architecture hasn't changed. The human is still the bottleneck. The AI is a faster pencil.

The AI-Native Pattern

Monk was designed from day one with the assumption that a machine reads the contract. This changes everything about the architecture.

The data model is richer. Because we're not constrained by what a human can reasonably type into a form, we extract and store the full semantic structure of a contract — every clause, every condition, every cross-reference. A bolt-on system stores what a human decided was important enough to enter. We store everything the contract says.

Confidence is a first-class concept. Every extracted field carries a confidence score. The system knows what it knows and what it doesn't. Legacy platforms with bolt-on AI treat extraction as binary — either it fills the field or it doesn't. There's no concept of "I'm 94% sure this is right but you should look at this clause." That nuance is the difference between a tool that needs constant supervision and one that earns trust over time.

Contract amendments are a solved problem, not a disaster. In legacy systems, amendments are the single biggest source of billing errors because they require a human to re-read the original contract, understand what changed, and manually update multiple fields. In Monk, an amendment is just another document. The system reads it, understands how it modifies the original obligations, and updates the billing schedule accordingly. Same pipeline. Same guardrails. Same speed.

The Compounding Gap

The difference between bolt-on and native widens over time. A bolt-on system improves when the vendor ships updates. An AI-native system improves continuously — from model upgrades, from eval suite expansion, from production feedback, from every contract it processes. After 12 months, a bolt-on system is roughly where it started. After 12 months, Monk has seen thousands of your contracts and knows your billing patterns better than your team does.

This is the real moat. Not the models — those are available to everyone. Not the UI — that's table stakes. The moat is the compound learning that happens when AI is the foundation rather than a feature.

To sum up:

Monk uses frontier AI models to extract pricing, obligations, and billing terms from contracts in under 2 minutes — then automatically generates invoices, handles amendments, and manages hybrid pricing complexity that other platforms can't touch. Built AI-native with an ensemble of specialized models, eval pipelines on Braintrust, and multi-layer guardrails, the system comprehends contracts rather than just scanning them, improving from every document it processes.

The result: finance teams go from 3-5 day manual turnarounds to real-time, 24/7 contract-to-cash automation without adding headcount.

Book a demo here to learn more!